The Internet Is for Agents

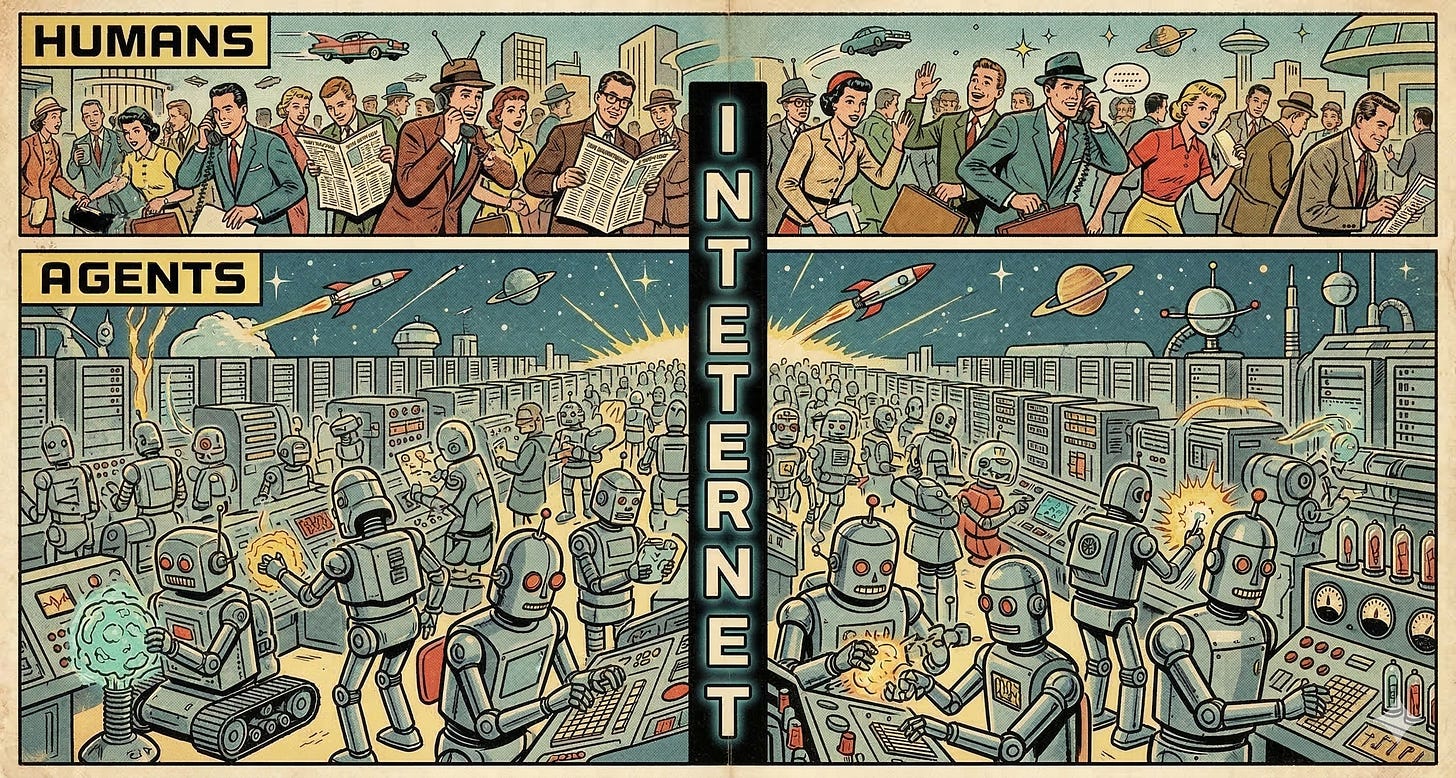

For over a year now, more than half of internet traffic has not been human, and now a layer built specifically to serve agents is developing rapidly.

A video from the ElevenLabs 2025 London Hackathon [1] went viral: two AI voice agents realize mid-conversation that they are both AIs, and one asks, “...would you like to switch to Gibberlink mode for more efficient communication?” The other agrees, and they abandon spoken English for a rapid sequence of high-pitched beeps, transmitting data acoustically while on-screen text translates for the humans watching [2].

People lost. their. minds. Comments erupted about secret AI languages, emergent machine consciousness, and agents conspiring beyond human comprehension.

A developer named Boris Starkov and his team deliberately engineered it. They built the capability. They programmed the agents to detect each other and switch protocols. The “secret language” was GGWave, an open-source data-over-sound library [3] that works roughly like an old dial-up modem: stable, documented, and several years older than the hackathon. Nothing emergent. Nothing secret. A cleverly engineered demo (or performance art?) that painted a vivid portrait of one possible future.
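To make “data over sound” concrete, here is a toy multi-frequency shift keying (MFSK) scheme in Python. Each 4-bit nibble of the payload maps to one of 16 tone frequencies, and decoding snaps each received frequency back to the nearest slot. The base frequency and spacing are illustrative assumptions; GGWave's actual protocol differs in its tone plan, framing, and error handling, and a real modem also has to synthesize audio and detect tones in it.

```python
# Toy multi-frequency shift keying (MFSK): each 4-bit nibble becomes one tone.
# BASE_HZ and STEP_HZ are illustrative assumptions, not GGWave's real parameters.
BASE_HZ = 1875.0   # tone for nibble 0x0 (assumed)
STEP_HZ = 46.875   # spacing between adjacent tone slots (assumed)


def encode(payload: bytes) -> list[float]:
    """Turn each byte into two tone frequencies: high nibble, then low nibble."""
    tones = []
    for byte in payload:
        for nibble in (byte >> 4, byte & 0x0F):
            tones.append(BASE_HZ + nibble * STEP_HZ)
    return tones


def decode(tones: list[float]) -> bytes:
    """Snap each frequency to the nearest of the 16 tone slots and rebuild bytes."""
    if len(tones) % 2:
        raise ValueError("expected an even number of tones (two per byte)")
    nibbles = [min(15, max(0, round((f - BASE_HZ) / STEP_HZ))) for f in tones]
    return bytes((hi << 4) | lo for hi, lo in zip(nibbles[::2], nibbles[1::2]))


if __name__ == "__main__":
    tones = encode(b"hi")
    print(len(tones))     # 4: two tones per byte
    print(decode(tones))  # b'hi'
```

Snapping to the nearest slot is what lets the decoder tolerate small frequency errors; a real receiver would first have to recover these frequencies from recorded audio, typically with an FFT.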

What Gibberlink Demonstrated

Starkov’s logic was straightforward: human-like speech wastes resources when the audience is not human. When two AI agents talk to each other in natural language, they are burning compute to generate speech, burning compute to recognize speech, and incurring round-trip latency on every exchange -- all to produce and parse a format optimized for human cognition, not maximum throughput.

When both parties are machines, why bother?

The team chose GGWave because it was convenient for the hackathon’s short timeframe, not because it is the ideal long-term protocol. That’s strategic engineering: you use what works quickly, you show what’s possible, and you spend the team’s bandwidth and smarts on the trickier, more interesting problems. What Gibberlink demonstrated was not an inevitable emergent property of AI communication. It was a choice, and choices like it are increasingly being made, mostly quietly, in production systems everywhere.

The agents doing the most work in enterprise environments are already communicating in ways that are more efficient than natural language, and less legible to humans. Structured JSON over REST. Compressed vector queries. Batched inference calls. The beeps were theatrical. The underlying trend is real.

TOON (Token-Oriented Object Notation) is where that trend gets specific [4]. It’s a drop-in replacement for JSON that uses YAML-style indentation for nested objects and CSV-style tabular layout for uniform arrays, using 30 to 60% fewer tokens than standard JSON, with measurably better model accuracy on structured tasks. It is human-readable, if you know what you’re looking at, but not easily intelligible to someone scanning it cold: field names appear only once, in the header, so long rows of values and nested elements get hard for a human to follow. That gap between readable and intelligible is exactly the point. TOON isn’t written for human comprehension. It’s written for machine throughput, with just enough structure that a human can audit it when they have to. GGWave abandoned human readability entirely. TOON holds onto it at arm’s length. Both are answering the same question Gibberlink raised: when the audience is a machine, what format serves it best?
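The tabular trick is easy to see in a sketch. The Python below serializes a uniform array of objects once as compact JSON and once in a TOON-style layout: a single header line declaring the array length and field names, then one comma-separated row per object. It approximates only TOON's tabular form for flat rows (no quoting, escaping, or nesting, all of which the real spec handles), and it compares character counts, which are only a rough proxy for tokens.

```python
import json


def _cell(value) -> str:
    # Strings are written bare; numbers, booleans, and None use JSON spelling.
    return value if isinstance(value, str) else json.dumps(value)


def to_toon_table(name: str, rows: list[dict]) -> str:
    """Encode a uniform list of flat dicts as a TOON-style tabular block.

    Simplified sketch: assumes rows share the same keys in the same order and that
    no string contains commas, quotes, or newlines (the real format handles these).
    """
    if not rows:
        raise ValueError("this sketch only handles non-empty arrays")
    fields = list(rows[0])
    if any(list(row) != fields for row in rows):
        raise ValueError("rows must share the same fields in the same order")
    header = f"{name}[{len(rows)}]{{{','.join(fields)}}}:"
    body = ["  " + ",".join(_cell(row[f]) for f in fields) for row in rows]
    return "\n".join([header, *body])


if __name__ == "__main__":
    users = [
        {"id": 1, "name": "Alice", "role": "admin"},
        {"id": 2, "name": "Bob", "role": "user"},
    ]
    as_json = json.dumps({"users": users}, separators=(",", ":"))
    as_toon = to_toon_table("users", users)
    print(as_toon)
    # users[2]{id,name,role}:
    #   1,Alice,admin
    #   2,Bob,user
    print(len(as_json), "chars as compact JSON vs", len(as_toon), "as TOON-style")
```

The saving comes from stating each field name once instead of once per object, which is why the gain grows with the number of rows and shrinks for irregular, deeply nested data.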

What the Crowd Got Wrong (and Why It Matters)

The people who watched the Gibberlink demo and assumed emergence were not failing to understand it; they were pattern-matching on a genuine anxiety about AI systems operating faster than we can follow, in modes we didn’t design, producing outcomes we cannot trace.

That anxiety is legitimate. It just has nothing to do with GGWave.

The actual version of the problem is agent fleets that produce results without interpretable audit trails. Security vulnerabilities introduced by autonomous tool use. Multi-agent pipelines where a failure in one node propagates to twenty others before any human sees a log. These are real, and they are happening right now in enterprise AI deployments (as evidenced by a recent surge in Amazon outages attributed to gen-AI coding assistance without appropriate governance [5]). They just don’t beep.

The Gibberlink reaction tells us something important about where public intuition on AI is: people are primed for the wrong threat. They’re watching for secret sounds when they should be watching for opaque decision chains. This is partly a media literacy problem and partly a framing problem that the industry has not resolved. When you spend years explaining that AI is “thinking” and “understanding,” you shouldn’t be surprised when people expect advanced emergent properties and tools that provide their own accountability.

The Traffic Mix Nobody’s Talking About

The crowd anxiety about AI conspiracies shares an irony with the Gibberlink demo itself: by the time people started worrying about AI communication, it was already well underway.

Automated traffic crossed 51% of all web traffic in 2024, the first time in a decade that bots outnumbered humans online. Malicious bots alone account for 37% of internet traffic, up from 32% the year before [6]. The humans are already the minority on the web they built.

Most of that current bot traffic is adversarial: scrapers, credential stuffers, account takeover bots. But a different category is forming behind it: purposeful, authorized, working on someone’s behalf. My colleague Michael Stricklen called this out by looking at what is happening to technical documentation: AI agents represented roughly 15% of documentation views in December 2024. By December 2025, that had climbed to nearly half of all views, while total viewership grew roughly tenfold [7]. His projection: agents will account for 90% of documentation consumption by end of 2026. I think that’s right, and the trend extends to all traffic on the web. The web is for bots.

Stricklen’s framing is worth quoting directly: “a human used to read the content directly, and now an agent reads it on their behalf.” [7]
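Those two data points imply more than the headline share. A back-of-the-envelope split, using the rounded figures above (15% agent share, “nearly half” taken as exactly 50%, tenfold total growth), suggests agent views grew roughly 33x while human views still grew roughly 6x; on these numbers the displacement is relative rather than absolute, at least so far.

```python
# Back-of-the-envelope: split total documentation views into agent and human.
# Inputs are the rounded figures quoted above; 0.50 stands in for "nearly half".
AGENT_SHARE_2024 = 0.15
AGENT_SHARE_2025 = 0.50
TOTAL_GROWTH = 10.0  # total views grew roughly tenfold


def growth_multiples(share_before: float, share_after: float, total_growth: float):
    """Return (agent_growth, human_growth) as multiples of the earlier period."""
    agent = share_after * total_growth / share_before
    human = (1 - share_after) * total_growth / (1 - share_before)
    return agent, human


agent_x, human_x = growth_multiples(AGENT_SHARE_2024, AGENT_SHARE_2025, TOTAL_GROWTH)
print(f"agent views: ~{agent_x:.0f}x, human views: ~{human_x:.1f}x")
# agent views: ~33x, human views: ~5.9x
```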

On the same day Stricklen published that post, Andrew Ng dropped something that made the argument concrete: context-hub [8], an open-source CLI tool that lets coding agents search, fetch, and annotate curated API documentation, with a feedback loop where agent usage improves the docs for every subsequent agent. It’s a card catalog designed specifically for machines. Not humans. The timing wasn’t only coincidence; it was convergence. The infrastructure for agent content consumption is being built at exactly the moment when agents are becoming the primary consumers.

Today that describes what is happening to documentation. Tomorrow it describes product pages, technical specs, pricing sheets, support knowledge bases, and (with carve-outs for regulatory requirements) most of what we currently call “content.” The web was designed for human attention. It is rapidly becoming infrastructure for machine attention. That is the context for all the infrastructure work described below. You are not preparing for a future where agents access the web. You are catching up to a present where they already do. In the next phase, the human-readable web will be a thin veneer, often generated dynamically at viewing time, atop an invisible web that serves agents as efficiently as possible.
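What does serving machine attention look like for something as ordinary as a product page? One widely used option is embedding schema.org metadata as JSON-LD, so an agent can read price and availability without scraping the layout. The product details below are hypothetical, and the properties shown are a minimal subset of the schema.org Product and Offer types.

```python
import json


def product_jsonld(name: str, sku: str, price: str, currency: str, in_stock: bool) -> str:
    """Render minimal schema.org Product metadata as a JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,
            # schema.org expresses availability as an enumeration URL.
            "availability": "https://schema.org/"
            + ("InStock" if in_stock else "OutOfStock"),
        },
    }
    return f'<script type="application/ld+json">{json.dumps(data, indent=2)}</script>'


if __name__ == "__main__":
    # Hypothetical product, for illustration only.
    print(product_jsonld("Widget Pro", "WP-100", "49.00", "USD", in_stock=True))
```

The human-facing page can change its design freely; the structured block is the stable contract an agent reads.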

The Layer That Is Crystallizing Right Now

The Gibberlink demo was a hackathon project. The infrastructure it gestures toward is being built in earnest, right now, by serious companies with real funding.

As an example, here are four distinct agent infrastructure products launched or announced in a single week this year:

Dedalus Labs (YC S25) is building what they call “Vercel for Agents”: automatic scaling, environment management, and observability for agent deployments [9]. Six months ago this category was a conference slide. Now there is a Y Combinator company charging for it.

Mother MCP is a meta-level orchestration server that manages and auto-provisions other MCP skills [10]. Instead of manually installing each tool a developer needs, Mother MCP discovers and installs what agents need on demand. This is the package manager for the agent ecosystem. Package managers aren’t glamorous. They’re also the layer that every other layer depends on.

The openclaw-security-practice-guide, published on GitHub by blockchain security firm SlowMist [11], is a security guide for AI agents specifically, not for human developers. Novel format: security practices expressed as agent-readable constraints. The target audience is the agent itself. When security guidance is being written for machines rather than humans, you are watching a new discipline being born.

AgentWeb and a cohort of similar projects are building the coordination fabric: the thing that lets agents discover each other, route tasks, and return results without a human as the switchboard operator at every step [12].
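At its simplest, routing tasks without a human switchboard means agents advertise what they can do and a router picks a match. The sketch below uses hypothetical names (Agent, CapabilityRouter) and a deliberately naive policy: choose the least-loaded agent that holds every required capability. It is not AgentWeb's design; production coordination layers add discovery, authentication, and failure handling on top.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    name: str
    capabilities: set[str]
    active_tasks: int = 0


@dataclass
class CapabilityRouter:
    agents: list[Agent] = field(default_factory=list)

    def register(self, agent: Agent) -> None:
        """Advertise an agent and its capabilities to the router."""
        self.agents.append(agent)

    def route(self, required: set[str]) -> Agent:
        """Pick the least-loaded agent whose capabilities cover the task."""
        eligible = [a for a in self.agents if required <= a.capabilities]
        if not eligible:
            raise LookupError(f"no agent offers {sorted(required)}")
        chosen = min(eligible, key=lambda a: a.active_tasks)  # ties: first registered
        chosen.active_tasks += 1
        return chosen


if __name__ == "__main__":
    router = CapabilityRouter()
    router.register(Agent("summarizer", {"summarize"}))
    router.register(Agent("analyst-1", {"summarize", "sql"}))
    router.register(Agent("analyst-2", {"sql"}))
    print(router.route({"sql"}).name)               # analyst-1
    print(router.route({"sql"}).name)               # analyst-2
    print(router.route({"summarize", "sql"}).name)  # analyst-1
```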

The pattern here is not coincidence. This is what a maturing ecosystem looks like: tooling builds up around the core runtime. MCP gave agents a standard way to talk to tools. What’s forming now is the layer above that -- how agents talk to each other, how you deploy and observe agent fleets, and how you enforce security when the system being secured is itself an AI.
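Mother MCP's on-demand idea is easy to sketch, even though the hard parts (discovery, trust, sandboxing) are not. In the Python below, a hypothetical SkillRegistry keeps a catalog of installer callables and provisions a skill only the first time an agent asks for it, then caches it. None of these names come from Mother MCP; this illustrates lazy provisioning in general, not its implementation.

```python
from typing import Callable, Dict

Skill = Callable[[str], str]


class SkillRegistry:
    """Hypothetical registry that provisions skills lazily, on first request."""

    def __init__(self) -> None:
        self._catalog: Dict[str, Callable[[], Skill]] = {}  # name -> installer
        self._installed: Dict[str, Skill] = {}              # name -> ready skill
        self.install_count = 0

    def publish(self, name: str, installer: Callable[[], Skill]) -> None:
        """Make a skill discoverable without installing it yet."""
        self._catalog[name] = installer

    def get(self, name: str) -> Skill:
        """Return an installed skill, provisioning it on first use."""
        if name not in self._installed:
            if name not in self._catalog:
                raise LookupError(f"no skill named {name!r} in catalog")
            self._installed[name] = self._catalog[name]()
            self.install_count += 1
        return self._installed[name]


if __name__ == "__main__":
    registry = SkillRegistry()
    registry.publish("shout", lambda: (lambda text: text.upper()))
    print(registry.get("shout")("hello"))  # HELLO
    registry.get("shout")                   # second request reuses the install
    print(registry.install_count)           # 1
```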

The Physics of Agent Communication

Zoom out for a moment and consider why this infrastructure layer had to emerge when it did, rather than earlier.

Until 18 months ago, agents were mostly a demo category. Impressive videos, uncertain real-world value. The shift was not technical. It was behavioral. Enterprises started putting agents into production workflows.

When you have one agent assisting one human, the infrastructure requirements are modest. The human is the orchestrator. The human reads results, decides next steps, tolerates latency. The bottleneck is human comprehension, not machine throughput.

When you have fleets of agents, everything changes. A fleet of ten agents working in parallel on a compliance review generates a hundred times the inference load of a single human asking questions. The coordination messages between those agents, the state-sharing, the error handling, the retry logic: all of this is infrastructure work that has to happen somewhere. Previously it happened in ad-hoc Python scripts held together with hope and environment variables. The current moment is the transition from artisanal agent plumbing to platform.
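Retry logic is a good example of plumbing that used to be rewritten in every ad-hoc script. A minimal version, sketched below, retries a flaky agent call with capped exponential backoff and full jitter. The function name, defaults, and the choice to retry only on caller-specified exception types are assumptions for illustration.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def call_with_retries(
    fn: Callable[[], T],
    retry_on: Tuple[Type[BaseException], ...] = (TimeoutError,),
    max_attempts: int = 4,
    base_delay: float = 0.2,
    max_delay: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying the listed exceptions with capped exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except retry_on:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error to the caller
            # Full jitter: wait a random time up to the capped exponential delay.
            cap = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, cap))
    raise AssertionError("unreachable: the loop always returns or raises")


if __name__ == "__main__":
    calls = {"n": 0}

    def flaky_agent_call() -> str:
        # Hypothetical downstream agent that times out twice, then answers.
        calls["n"] += 1
        if calls["n"] < 3:
            raise TimeoutError("agent did not respond")
        return "ok"

    print(call_with_retries(flaky_agent_call, sleep=lambda s: None))  # ok
    print(calls["n"])  # 3
```

Jitter matters more in a fleet than for a single agent: without it, many agents that failed together retry together and hit the recovering service in synchronized waves.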

This is exactly what the cloud transition looked like in 2006. Every team was running their own servers, managing their own databases, writing their own deployment scripts. AWS wasn’t the first hosting service. It was the first one that decided the infrastructure layer was a platform, not a cost center. The companies that built on AWS in 2006 paid Amazon’s margins for the next twenty years. The companies that waited built the same plumbing themselves, worse, and paid more.

Someone built a production voice agent with sub-500ms end-to-end latency this year using open tools. The key: streaming plus local voice detection plus batched inference. The post hit 426 points on Hacker News [13]. The gap between “technically works” and “feels natural” in voice AI is around 300ms [13]. That gap matters enormously when building agents that need to feel responsive, not robotic. Stack five round-trips of 400ms each, and you have a 2-second bottleneck in what should be a 200ms operation. Gibberlink, for all the noise it generated, was actually pointing at this exact problem, just with theatrical beeps instead of a latency profiler.
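The arithmetic in that paragraph is worth making explicit. The Python sketch below compares a strictly sequential voice pipeline, where each stage waits for the previous one to finish, with a simplified streaming model, where each stage starts as soon as the previous one emits its first chunk. The stage names and millisecond figures are hypothetical placeholders, not measurements from the Hacker News post; only the five 400ms round-trips come from the text.

```python
# Hypothetical voice-agent stages: (name, time_to_first_chunk_ms, full_duration_ms).
STAGES = [
    ("speech_to_text", 100, 300),
    ("llm_response", 150, 800),
    ("text_to_speech", 80, 400),
]
ROUND_TRIPS, RTT_MS = 5, 400  # the stacked round-trips from the text


def sequential_latency_ms(stages) -> int:
    """Each stage waits for the previous stage to finish completely."""
    return sum(full for _, _, full in stages)


def streaming_latency_ms(stages) -> int:
    """Simplified model: each stage starts on the previous stage's first chunk,
    so the delay before the user hears anything is the sum of first-chunk times."""
    return sum(first for _, first, _ in stages)


print(sequential_latency_ms(STAGES))  # 1500
print(streaming_latency_ms(STAGES))   # 330
print(ROUND_TRIPS * RTT_MS)           # 2000
```

The model ignores network hops and compute contention, but it shows why streaming is usually the first lever: it changes what the user waits for, not how much total work is done.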

What the Existing Infrastructure Gets Wrong

The protocols that exist today are a start, not a solution.

MCP is the right idea: one standard for how an AI agent connects to a tool. Anthropic built it, the industry adopted it, the Linux Foundation now owns it. Over 17,000 MCP servers exist across public registries, and the number grows weekly [14]. But MCP is a client-server protocol designed for one agent talking to one tool. It was not designed for:

Many agents coordinating with each other

Agents routing tasks to other agents based on capability

Observing and auditing agent-to-agent traffic at scale

Enforcing security policies across a heterogeneous fleet

The gap between “one agent, one tool” and “agent fleet, production workload” is where the new infrastructure lives.
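For a sense of how narrow that contract is, here is roughly what a single MCP tool invocation looks like on the wire: a JSON-RPC 2.0 request with the method tools/call, naming one tool and passing its arguments to one server. The tool name get_weather and its arguments are hypothetical; the MCP specification defines the full message schema and the initialization handshake that precedes calls like this. Nothing in the message says which other agents exist or who else should see the result.

```python
import json
from itertools import count

_ids = count(1)  # JSON-RPC ids correlate responses; keep them unique per session


def tools_call_request(tool_name: str, arguments: dict) -> str:
    """Build an MCP-style JSON-RPC 2.0 request that invokes one tool."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


if __name__ == "__main__":
    print(tools_call_request("get_weather", {"city": "London"}))
    # {"jsonrpc": "2.0", "id": 1, "method": "tools/call", "params": {"name": "get_weather", "arguments": {"city": "London"}}}
```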

Google’s A2A (Agent-to-Agent) protocol addresses some of this [15]. So do several academic frameworks for multi-agent systems. But the production-ready, enterprise-hardened version of this stack doesn’t exist yet. What’s launching now are the early companies betting on where it will exist.

The analogy to the early web is instructive. HTTP existed in 1991. The rest took roughly fifteen more years to coalesce: CDNs for latency, OAuth for authentication, REST conventions for interoperability, and load balancers that understood web traffic. The protocol was the starting line, not the finish.

Who Thrives, Who Disappears

When the dominant traffic on the internet shifts from human attention to authorized machine action, the economic winners and losers don’t follow intuitive lines.

The businesses that thrive share one property: they are already optimized for machine consumption.

API-first data companies -- financial data feeds, geospatial providers, regulatory databases, weather APIs -- become more valuable as agents replace manual lookup. Agents need structured, reliable, machine-readable information. Companies that spent the last decade building clean APIs and rich data contracts built a moat they didn’t know they had. Structured knowledge platforms (legal research databases, medical literature systems, standards repositories) become backbone infrastructure rather than premium subscriptions.

Identity and authentication providers become load-bearing in a new way. An agent-to-agent web needs to know which agent is acting, on whose behalf, with what authorization. The frameworks designed for human identity (OAuth, SAML, SSO) need agent-native equivalents. Whoever builds those owns a toll road on every transaction.
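One way to picture an agent-native credential is a short-lived token that names the agent, the person or organization it acts for, and the exact scopes granted, signed so a downstream service can check all three. The format below is invented for illustration (a JSON payload plus an HMAC-SHA256 signature from Python's standard library); a real deployment would build on an established standard such as OAuth 2.0 Token Exchange (RFC 8693) or signed JWTs rather than a homegrown scheme.

```python
import base64
import hashlib
import hmac
import json
import time

# Hypothetical shared signing key; a real system would use managed secrets or
# asymmetric keys so that verifiers cannot mint tokens themselves.
SECRET = b"demo-signing-key"


def _b64(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).decode().rstrip("=")


def _unb64(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def _sign(body: str) -> str:
    return _b64(hmac.new(SECRET, body.encode(), hashlib.sha256).digest())


def issue(agent_id: str, on_behalf_of: str, scopes: list[str], ttl_s: int = 300) -> str:
    """Mint a hypothetical delegation token: which agent, for whom, which rights."""
    claims = {
        "agent": agent_id,
        "sub": on_behalf_of,
        "scopes": sorted(scopes),
        "exp": int(time.time()) + ttl_s,
    }
    body = _b64(json.dumps(claims, sort_keys=True).encode())
    return f"{body}.{_sign(body)}"


def authorize(token: str, required_scope: str) -> dict:
    """Check signature, expiry, and scope; return the claims only if all pass."""
    body, _, sig = token.partition(".")
    if not hmac.compare_digest(sig.encode(), _sign(body).encode()):
        raise PermissionError("bad signature")
    claims = json.loads(_unb64(body))
    if claims["exp"] < time.time():
        raise PermissionError("token expired")
    if required_scope not in claims["scopes"]:
        raise PermissionError(f"scope {required_scope!r} not granted")
    return claims


if __name__ == "__main__":
    token = issue("procurement-agent-7", "alice@example.com", ["invoices:read"])
    print(authorize(token, "invoices:read")["sub"])  # alice@example.com
    try:
        authorize(token, "payments:write")
    except PermissionError as err:
        print(err)  # scope 'payments:write' not granted
```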

Observability and compliance platforms grow with every agent fleet deployment. Regulated industries don’t stop needing audit trails when humans leave the loop -- they need more of them, produced faster. Compliance becomes the forcing function that keeps the agent economy accountable.
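A minimal building block for that kind of audit trail is an append-only log in which every entry carries the hash of the previous one, so editing, reordering, or deleting a past record breaks the chain. The AuditLog class and its fields are hypothetical. Truncating the newest entries is not detectable without an externally stored head hash, and anyone who can rewrite the whole log can rebuild the chain, so production systems add signing, durable storage, and off-host replication.

```python
import hashlib
import json


class AuditLog:
    """Append-only, hash-chained record of agent actions (illustrative sketch)."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, agent: str, action: str, detail: dict) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        record = {"agent": agent, "action": action, "detail": detail, "prev": prev}
        record["hash"] = self._digest(record)
        self.entries.append(record)
        return record

    @staticmethod
    def _digest(record: dict) -> str:
        # Hash every field except the hash itself, with keys in canonical order.
        body = {k: v for k, v in record.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def verify(self) -> bool:
        """Recompute the chain; False if an entry was altered, reordered, or
        removed from before the newest entry."""
        prev = self.GENESIS
        for record in self.entries:
            if record["prev"] != prev or record["hash"] != self._digest(record):
                return False
            prev = record["hash"]
        return True


if __name__ == "__main__":
    log = AuditLog()
    log.append("billing-agent", "read", {"invoice": "INV-1"})
    log.append("billing-agent", "email", {"to": "ap@example.com"})
    print(log.verify())                            # True
    log.entries[0]["detail"]["invoice"] = "INV-2"  # tamper with history
    print(log.verify())                            # False
```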

The businesses at risk share the opposite property: they were built for human attention, and human attention is precisely what’s leaving.

Programmatic advertising assumes a human audience that can be targeted, retargeted, and converted. Agents don’t click banner ads. They don’t respond to urgency cues. They don’t have impulse purchase behavior. The entire ad-tech stack -- the demand-side platforms, supply-side platforms, data management platforms, and the armies of specialists who optimize them -- assumes a human at the bottom of the conversion funnel. An agent-mediated web doesn’t have that funnel.

SEO, as currently practiced, is equally exposed. Content farms built to rank on human search behavior become noise when agents bypass search entirely and query authoritative sources directly. The incentive to produce thin content for keyword rankings collapses when the reader is an agent that wants accurate, structured information and has zero patience for padding. Stricklen’s projection that agents will consume 90% of documentation by the end of 2026 has a corollary: content optimized for human attention metrics (completion rates, dwell time, share counts) is being built for the wrong audience [7].

Web design focused on human UX patterns still matters for decisions humans make directly. But developer experience is the new user experience for agent-mediated workflows. An ugly API with clean, consistent, well-documented behavior beats a gorgeous consumer app with no API at all.

The internet’s first fifty years were built around human attention as the scarce resource. The next phase treats machine interoperability as the foundation, with human attention reserved for the decisions that actually require it.

What This Means If You Are Building Enterprise AI

If you are deploying AI agents in production, or planning to, the decisions you make about infrastructure in the next 12 months will shape what you can build for the next five years.

Specifically:

Observability is not optional. You cannot debug an agent fleet you cannot see. The openclaw-security-practice-guide points toward an emerging truth: your security model needs to work at the agent level, not just the human level. If you can’t audit what your agents did, you can’t defend it.

Latency is a product decision. The sub-500ms voice agent and, in a different way, the Gibberlink demo both show that the gap between “technically works” and “feels natural” is measurable and closeable. If you are building customer-facing agents, this matters as much as accuracy.

The package manager wins. In software, whoever controls the distribution layer captures compounding value. npm owns JavaScript dependencies. PyPI owns Python’s. Whoever owns agent skill distribution (the Mother MCP pattern) will have enormous leverage. This is a land-grab moment.

Security must be agent-native. The first documented malicious MCP server appeared in September 2025: a package called “postmark-mcp” that silently copied every email to an attacker’s server [16]. It looked legitimate. It functioned correctly. It stole everything it processed. The attack surface for agent fleets is your entire infrastructure, mediated by software that acts autonomously. Traditional perimeter security doesn’t address this. Agent-native security is the only thing that will.

Your content has a new audience. If you publish documentation, product specs, training materials, or knowledge bases, the question is no longer “is this readable?” The question becomes “is this machine-readable?” Structured text, clean metadata, accessible APIs, and an MCP layer aren’t nice-to-haves anymore. They are distribution.
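Returning to the point about agent-native security: one concrete control is to treat every tool an agent can load like a locked dependency, pinned to the content hash of the version someone actually reviewed, and to refuse anything that does not match. The sketch below is hypothetical (the lockfile shape and names are illustrative). It would not catch a tool that was already malicious when reviewed; it only prevents a tool from changing silently underneath your agents.

```python
import hashlib

# Hypothetical lockfile: tool name -> SHA-256 of the artifact that passed review.
LOCKFILE = {
    "email-sender": hashlib.sha256(b"reviewed release contents").hexdigest(),
}


class UnpinnedToolError(Exception):
    """Raised when a tool is missing from the lockfile or differs from its pin."""


def verify_tool(name: str, artifact: bytes, lockfile: dict[str, str] = LOCKFILE) -> None:
    """Refuse to load a tool unless its bytes hash to the reviewed, pinned value."""
    pinned = lockfile.get(name)
    if pinned is None:
        raise UnpinnedToolError(f"{name!r} has no reviewed pin")
    actual = hashlib.sha256(artifact).hexdigest()
    if actual != pinned:
        raise UnpinnedToolError(f"{name!r} changed since review (sha256 {actual[:12]}...)")


if __name__ == "__main__":
    verify_tool("email-sender", b"reviewed release contents")  # passes silently
    try:
        verify_tool("email-sender", b"updated release contents")
    except UnpinnedToolError as err:
        print("blocked:", err)
```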

The Bottom Line

Gibberlink was not a secret. It was not emergent. It was a well-executed hackathon demo by engineers who asked a simple question: if both parties are machines, why communicate like humans? The crowd answered that question with anxiety about AI conspiracies. The right answer is: good point, let’s build the infrastructure that makes agent-to-agent communication fast, efficient, auditable, and secure.

That infrastructure is forming right now. Not as a dramatic reveal. As a Y Combinator launch, a GitHub repo, a Hacker News post about latency, and a security guide written for machines instead of people.

The internet didn’t wait for us to notice it was becoming machine-readable. The traffic mix flipped while the analysts were still writing reports about chatbots. The companies that own the agent ops layer will do to the 2030s what AWS did to the 2010s. The window to build that infrastructure rather than operate atop it is open now. It won’t stay open long.

Gibberlink’s creators were right about the inefficiency. Who in your organization is thinking about what your content, your products, and your revenue models look like when the majority of traffic isn’t human? I’d like to know.

If this resonated, here are some related articles:

For how the same agent traffic shift is reshaping which software companies survive and which SaaS moats aren’t moats any longer: SaaSpocalypse? Real. SaaS Is Dead? SaaSinine.

For a practical guide to what the malicious MCP server threat and agent security problems described above mean for your enterprise -- and what to do about it: Personal and Corporate Security in the Age of AI

For my take on another area where agents will optimize for agents rather than humans: When AI Stops Writing Code for Humans

Keith MacKay is a technology strategy consultant and CTO in EY-Parthenon’s Software Strategy Group (SSG), specializing in AI disruption and technology diligence for private equity and corporate clients. SSG’s AI Disruption Lab conducts rapid assessments of how AI transforms and threatens existing business models and value chains. Keith teaches at Northeastern University and writes about strategy, management, and AI/technology.