When AI Stops Writing Code for Humans

My predicted transition from human-readable to machine-native code, and what it means for every language you’ve ever loved

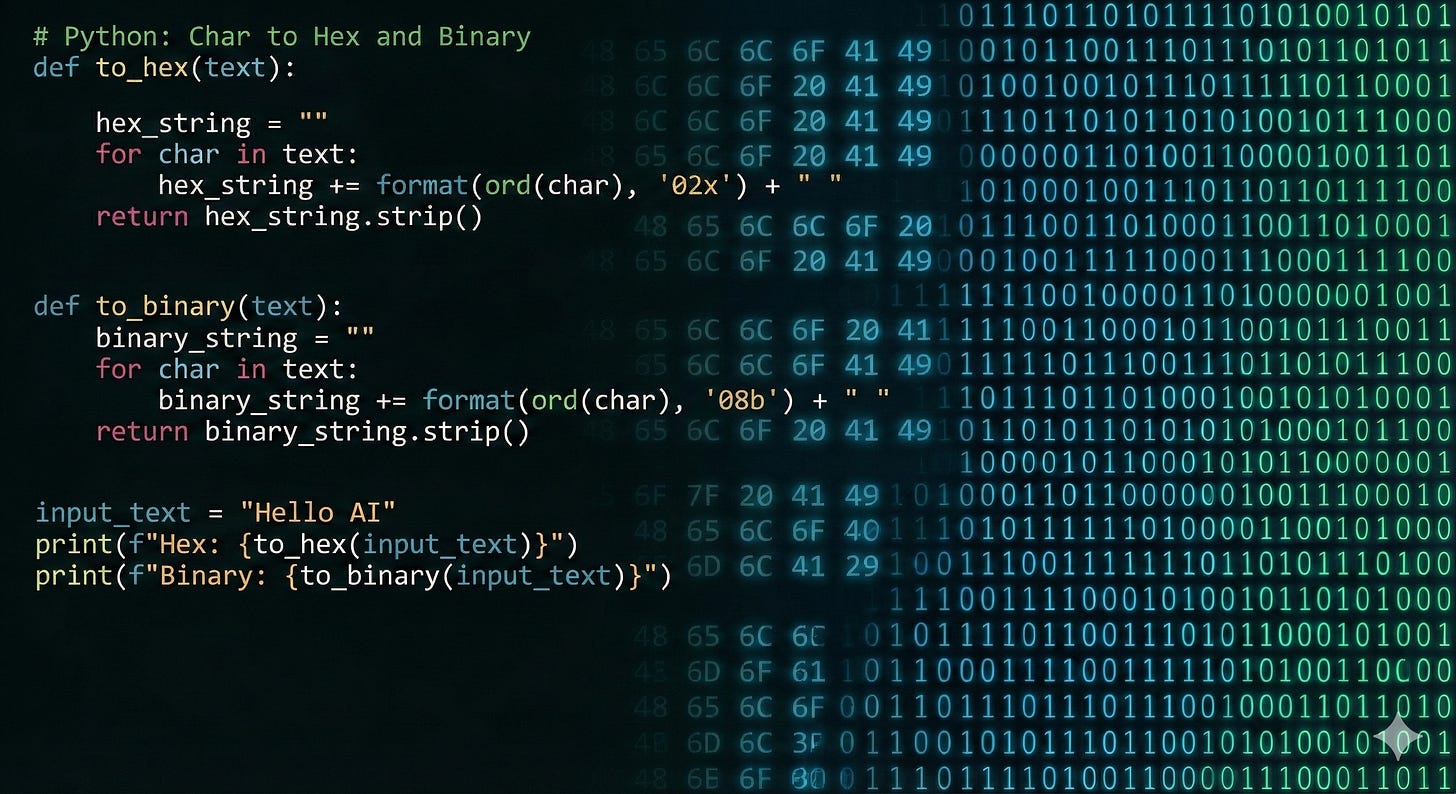

You’re watching an AI assistant write Python. Clean variable names. Helpful comments. Logical structure. It looks like something a senior developer would produce on a good day.

Now ask yourself: who is that code for?

Not the machine. The machine doesn’t care about your variable names. It doesn’t read your comments. It executes compiled instructions, and all those thoughtful abstractions exist for exactly one reason: so a human can read them later. The entire history of programming languages is a story of making machines comprehensible to people, letting us formalize the algorithm for a task in a way we can still understand later when we want to change it. COBOL reads like English on purpose. Python’s whitespace forces readability. Every language you’ve ever used is a translation layer between what humans think and what machines do.

But if humans stop reading the code, why keep translating?

The Write-Only Threshold

Joseph Ruscio (in conjunction with Waldemar Hummer) recently coined the term “write-only code” to describe a future where AI agents produce software that humans never review [1]. Not because they can’t, but because it isn’t necessary. The agents plan, execute, self-correct, and ship. The code works. Nobody reads it. Nobody needs to.

We’re not there yet. But we’re approaching a tipping point: the moment code truly becomes write-only, human readability stops being a requirement.

Now, human-readability was not where we started. My first programs (about 45 years ago, give-or-take) were not human-readable. Coding was painfully slow. These programs displayed numbers and text on a six-digit 7-segment LED output, and were punched in hexadecimal machine language directly into memory on a Heathkit ET6800 Microprocessor Trainer kit that I had soldered together…it had a Motorola 6808 processor and a cardboard case, and I can still see the blue (on blue on blue on white) box vividly in my mind’s eye (I could add numbers! I could make the segments blink! I could MAKE THE MACHINE DO STUFF! Heady stuff for a kid.)

Each command had to be translated from my human-readable pseudo-code into assembly language (human-readable shorthand representations of the 72 possible machine instructions for the 6808, like “LDA 10” which meant “load value 10 into Accumulator A”), and then into the hexadecimal code for that machine instruction, which I then punched directly into memory via the hexadecimal keypad. Slow going! To eliminate this sort of pain, smart engineers created some of the first widely used human-readable programming languages:

FORTRAN (FORmula TRANslation, i.e., science-oriented programming), developed at IBM, and

COBOL (COmmon Business-Oriented Language, for business applications), designed by the CODASYL committee and heavily influenced by Grace Hopper’s FLOW-MATIC

There is SOME (not a lot of) FORTRAN still out there, but amazingly there’s a TON of COBOL…if a Reuters graphic can be believed, there are 220 billion lines of COBOL still in use, with 95% of ATM swipes relying on it [2].

I think often of how far we’ve come since those Assembly Language/Machine Language days, and how far we can go. Forty-some years later, when I used a prompt to get my first LLM-generated code (was that only 18-24 months ago!?), I could see the glimmer of a path to a world without human-readable code...though I thought it would take over a decade (I should have known better! See my article We’re Linear Thinkers in an Exponential World). When readability stops providing any value, the entire rationale for high-level programming languages gets wobbly--and I see posts daily from world-class developers who have not hand-written a line of code in months. How much of that code are they reviewing? If you are vibe-coding or context engineering, do you review every line of code? Do you review any lines of code? Many users are already in their write-only code era. I anticipate a few disasters in 2026 as we begin to encounter the inevitable implications and start building out better verification and trust tools, but I believe the track we are on is unmistakable.

Why move to a world without human-readable code? Well, think about what we sacrifice for readability today. Every layer of abstraction between a Python script and the CPU adds overhead. Some of these layers exist purely for humans: IDEs, interpreters that let us iterate quickly, readable syntax. Others solve real engineering problems that may not vanish just because AI writes the code: garbage collectors prevent memory leaks and use-after-free bugs, compilers perform optimizations that even hand-written machine code rarely matches, runtime environments provide portability and security sandboxing. But all of them trace back to a common ancestor: the decision to write code in languages humans can read. The machine accommodates these layers. It doesn’t prefer them. And many of their functions could be handled differently (and more efficiently) if human readability were no longer a constraint.
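To make the interpreter-overhead point a little more concrete, here is a minimal sketch using Python’s standard `dis` module. It shows that one readable line of addition turns into several bytecode instructions, each of which the interpreter has to fetch and dispatch, where native code would typically need a single add instruction. The function name `add` is purely illustrative, and the exact opcode names vary between Python versions.

```python
import dis

def add(a, b):
    # One line of readable source...
    return a + b

# ...becomes several interpreter-level instructions.
instructions = list(dis.get_instructions(add))
opnames = [ins.opname for ins in instructions]

for ins in instructions:
    print(ins.opname, ins.argrepr)

print(f"{len(instructions)} bytecode instructions for one '+'")
```

This only counts instructions; it says nothing about the dynamic type checks and object allocation hiding behind each one, which is where much of the real cost sits.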

A model writing code that only another model will maintain has no reason to write Python. It has every reason to write something closer to what the machine actually executes.

The Native Machine Language Opportunity

If AI systems could generate and maintain machine-native code (assembly, intermediate representations, or optimized bytecode) directly from natural language descriptions, the performance implications would be wide-ranging.

What we’d gain:

Raw speed. No interpreter overhead. No runtime type checking. Memory managed statically at generation time (the way Rust’s borrow checker works, but without the human needing to reason about lifetimes) rather than dynamically through garbage collection pauses. Code that runs at or near the theoretical maximum the hardware allows.

Smaller binaries. Dramatically reduced dependency chains. No framework bloat. No language runtime to ship alongside your code. Programs would still make system calls and might link against OS-level libraries, but the ever-expanding dependency trees of modern package managers would shrink A LOT. Executables measured in kilobytes instead of megabytes (or gigabytes, for the JavaScript ecosystem, where node_modules has achieved a kind of terrifying interconnected-dependencies geological mass).

Hardware optimization. Code could be generated for specific architectures. ARM-native for mobile. GPU-optimized (CUDA-compliant, etc.) for ML workloads. FPGA patterns for edge computing. No abstraction layer pretending all hardware is the same.

Energy efficiency. Fewer instructions executed per unit of work means less power consumed. At data center scale, this matters enormously. At planetary scale, it matters ultra-mega-enormously. Mechanisms that use less power and less compute will matter a lot over the next few years as demand continues to grow exponentially but supply of power and chips does not.

The vision: You describe what you want in plain English. The model generates optimized machine code tailored to the destination platform. The program runs faster than anything a human could write in any high-level language. Another model maintains it, debugs it, extends it, and adapts it for new hardware as it is released.

I believe this is the logical endpoint of write-only code.

The Training Problem (and a Solution)

That said, we can’t do that with today’s models (yet). Current LLMs learned to code by consuming billions of lines of human-written source code from the internet. GitHub repositories, Stack Overflow answers, documentation, tutorials. The training data is overwhelmingly high-level, human-readable code.

Machine language? Assembly? Optimized bytecode? The internet has almost none of it. Developers don’t post assembly to Stack Overflow. Nobody pushes hand-optimized machine code to GitHub (well, almost nobody: a few vintage computing enthusiasts and embedded systems veterans, engineers’ engineers, have shared some of their work). The training data for machine-native code generation essentially doesn’t exist in the form that modern LLMs consume.

But … we do already have it in a different form.

Every compiled binary on every computer is a repository of machine-native code. And for open-source projects, we have both the source code AND the compiled output. Millions of programs, compiled across decades, for dozens of architectures. GCC and LLVM have been meticulously translating human intent into machine instructions since the 1980s and 2000s respectively.

The training pipeline would look something like this:

Paired corpus construction. Take known open-source codebases. Compile them with full debug symbols across target architectures. You now have source-to-machine-code pairs at the function level, with metadata linking human intent to machine output, with flavors per architecture family.

Decompilation alignment. Use existing decompilers (Ghidra, IDA Pro, Binary Ninja) to create intermediate representations. Train models on the bidirectional mapping: source to binary, binary back to approximate source.

Natural language bridging. Layer the existing NLP-to-source-code capability on top. The chain becomes: English description to high-level logic to machine-native output. Initially the model thinks in a high-level language internally, then translates. Eventually it has enough context to shortcut the middle step.

Reinforcement through execution. Generated machine code can be verified by running it directly on the target. Generate candidate machine code, execute it against test cases, reinforce the patterns that produce correct, fast results. High-level code can be tested the same way, but here the thing being measured is exactly the artifact that ships, with no compiler or runtime in between.

Architecture-specific fine-tuning. Train specialized models for x86, ARM, RISC-V, GPU compute shaders, and other targets. Each becomes an expert in its domain.
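The first step in that pipeline, paired corpus construction, can be sketched in a few lines. This is a toy under loud assumptions: it uses CPython’s own compiler as a stand-in for GCC or LLVM, so the “machine code” here is Python bytecode rather than native instructions, and the record fields (`name`, `target`, `source`, `compiled_hex`, `sha256`) are hypothetical names I made up for illustration. A real pipeline would pair C or Rust functions with object code per architecture.

```python
import ast
import hashlib

SOURCE = '''
def total_over(values, threshold):
    return sum(v for v in values if v > threshold)

def clamp(x, lo, hi):
    return max(lo, min(x, hi))
'''

def build_pairs(source: str, target: str = "cpython-bytecode"):
    """Return one source-to-compiled record per top-level function."""
    tree = ast.parse(source)
    namespace = {}
    # Compile and run the module so the function objects exist.
    exec(compile(tree, "<corpus>", "exec"), namespace)
    pairs = []
    for node in tree.body:
        if isinstance(node, ast.FunctionDef):
            code = namespace[node.name].__code__
            pairs.append({
                "name": node.name,
                "target": target,
                # Exact source text of this function (the "human intent" side).
                "source": ast.get_source_segment(source, node),
                # Raw compiled bytes (the "machine output" side).
                "compiled_hex": code.co_code.hex(),
                "sha256": hashlib.sha256(code.co_code).hexdigest(),
            })
    return pairs

pairs = build_pairs(SOURCE)
for p in pairs:
    print(p["name"], p["target"], len(p["compiled_hex"]) // 2, "bytes")
```

The function-level granularity is the design choice that matters: it keeps each training example small enough to align source and output, which is what debug symbols would provide in a native toolchain.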

What We Gain

The performance case writes itself. But the gains extend beyond raw speed.

Security through obscurity (real obscurity, not the fake kind). Machine-native code without source maps is genuinely harder to reverse-engineer. Today’s obfuscation tools are a nuisance for attackers. Machine-native code generated by AI, with no human-readable structure to recover, raises the bar significantly (...for humans. For any future nefarious AI agents? Not so much!).

Elimination of entire vulnerability classes. Many security vulnerabilities exist because human-readable languages make dangerous patterns easy to write. Buffer overflows in C because the language trusts programmers to manage memory correctly. Injection attacks where dynamic query construction mixes code and data (SQL injection, command injection) because languages make it easy to concatenate strings into executable statements. Type confusion in dynamic languages. A model generating machine code with built-in safety analysis could potentially enforce safety properties at the instruction level: bounds-checking memory access, separating code from data, and verifying type consistency before execution.
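The injection case is easy to demonstrate. The sketch below uses Python’s standard `sqlite3` module with an in-memory database; the `users` table and its contents are invented for the example. It contrasts string concatenation, where attacker input becomes part of the statement, with a parameterized query, where the input stays pure data.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
conn.executemany(
    "INSERT INTO users VALUES (?, ?)",
    [("alice", "a-secret"), ("bob", "b-secret")],
)

malicious = "nobody' OR '1'='1"

# Unsafe: the input is concatenated into the statement and becomes code.
unsafe_sql = "SELECT name FROM users WHERE name = '" + malicious + "'"
unsafe_rows = conn.execute(unsafe_sql).fetchall()

# Safe: the placeholder keeps the input as data, so it matches no user.
safe_rows = conn.execute(
    "SELECT name FROM users WHERE name = ?", (malicious,)
).fetchall()

print("concatenated query returned", len(unsafe_rows), "rows")
print("parameterized query returned", len(safe_rows), "rows")
```

The concatenated query returns every user; the parameterized one returns none. Separating code from data at the instruction level would be the same idea pushed further down the stack.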

Optimization beyond human capability. Modern CPUs have hundreds of instructions, many rarely used because humans can’t reason about them effectively. AI models could exploit the full instruction set, including SIMD operations, branch prediction hints, and cache-line alignment that human developers ignore because the cognitive cost is too high.

Dramatically reduced dependency hell. When your model generates machine-native code, there’s no `pip install`, no `npm install`, no version conflicts between packages. The towering dependency trees that create today’s supply chain attack surface collapse into direct system-level interfaces. The code isn’t perfectly self-contained (it still talks to the OS, and complex programs may still link against system libraries for networking, cryptography, or graphics), but the attack surface shrinks by orders of magnitude. The trade-off: the model itself becomes a form of supply chain. If it was trained on compromised code patterns, that risk propagates silently. We may someday discover “training attacks” that result from injected training data.

What We Give Up

And now the price tag.

Debuggability dies. When something goes wrong (and something always goes wrong), you’re staring at hex dumps and register states. No variable names. No stack traces with meaningful function names. No “line 47 of auth.py.” Just raw instructions that meant something to the model that wrote them and mean nothing to you.

Human oversight evaporates. Code review becomes impossible in any traditional sense. You can’t review what you can’t read. This is the write-only code thesis taken to its extreme: not just code that humans don’t read, but code that humans *can’t* read. The trust model shifts entirely to testing, verification, and behavioral analysis. It’s conceivable that we would have machine-code-to-pseudocode (or to human-readable language) tools, or other AI outputs that future human or AI reviewers could read and evaluate…at least as we continue the transition.

Portability fractures. One of the great achievements of high-level languages is write-once-run-anywhere (or at least write-once-compile-anywhere). Machine-native code is inherently architecture-specific. You’d need a model to regenerate the entire codebase for each target platform. Possible, performant, but a fundamentally different workflow.

The knowledge gap becomes a chasm. Today, a junior developer can read a codebase and learn how it works. In a machine-native world, the source of truth is the natural language specification and the AI’s internal representation. If the spec is incomplete (and specs are always incomplete), institutional knowledge dies with the model version that generated the code.

Regulatory and compliance challenges. Many industries require code audits. Financial systems, medical devices, aviation software: these all demand human-reviewable code trails. Machine-native code doesn’t satisfy those requirements without entirely new verification frameworks.

The Transition Period: Language Winners and Losers

We won’t jump from Python to machine code overnight. There’s a long middle ground. And during that transition, some programming languages will thrive while others will struggle.

The core dynamic: AI models generate code token by token. Languages that express more functionality in fewer tokens have an inherent advantage. Not because tokens cost money (though they do), but because fewer tokens means less context consumed, faster generation, and fewer opportunities for the model to drift or hallucinate or experience context rot.
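A rough way to see the token pressure is to count lexical pieces in two snippets that express the same idea. Big caveat: the regex below is a crude proxy I am assuming for illustration, not a real LLM tokenizer (those use subword schemes and will give different numbers), and the Java snippet is deliberately old-style; Java records narrow the gap considerably.

```python
import re

def rough_token_count(code: str) -> int:
    """Crude proxy: count words/numbers and individual punctuation marks."""
    return len(re.findall(r"\w+|[^\w\s]", code))

python_snippet = """
from dataclasses import dataclass

@dataclass
class Point:
    x: int
    y: int
"""

java_snippet = """
public final class Point {
    private final int x;
    private final int y;
    public Point(int x, int y) { this.x = x; this.y = y; }
    public int getX() { return x; }
    public int getY() { return y; }
}
"""

py_count = rough_token_count(python_snippet)
java_count = rough_token_count(java_snippet)
print(f"python: {py_count}, java: {java_count}, ratio: {java_count / py_count:.1f}x")
```

Treat the ratio as directional only. The point is that ceremony shows up as tokens, and tokens show up as context consumed.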

This creates a surprisingly clear hierarchy.

The Winners

Python stays dominant for now, but not because it’s the most token-efficient. It wins because the training data is overwhelming. More Python code exists in AI training sets than almost any other language. The model knows Python like a native speaker knows their mother tongue. Every pattern, every idiom, every library. The fluency compensates for the verbosity.

Rust emerges as a quiet winner. Its type system catches errors at compile time, which means the AI’s output gets validated before it ever runs. Fewer runtime surprises. Rust’s syntax is dense (lifetime annotations and trait bounds add verbosity), but the compiler enforces memory safety guarantees that C++ simply doesn’t provide by default. The model writes Rust well because Rust’s compiler acts as a built-in code reviewer: if it compiles, an entire class of memory bugs is already eliminated.

Go benefits from radical simplicity. Limited syntax. One way to do things. No generics-induced complexity (until recently, and even now, minimal). A model generating Go code has fewer decisions to make, which means fewer wrong decisions. Go’s verbosity is offset by its predictability.

Functional languages (Haskell, OCaml, Elixir) have a structural advantage that most people overlook. They resemble written natural language more closely than imperative languages do. Think about it: when you describe what you want in English, you describe what things are, not how to compute them step by step. “The sum of all even numbers in this list” is a specification, not an algorithm. Functional languages mirror this declarative style. The translation from English description to functional code is more direct because both describe what rather than how.

This matters more than most people realize. When a model converts “filter this list to items over 100, then sum them” into code, the declarative version maps closely to the original intent. SQL makes this most obvious: `SELECT SUM(value) FROM items WHERE value > 100` is practically English.

Functional languages follow a similar pattern: Elixir’s `list |> Enum.filter(&(&1 > 100)) |> Enum.sum()` mirrors the structure of the request even if the syntax has some ceremony. The imperative version, by contrast, requires inventing loop variables, accumulators, and control flow that didn’t exist in the original description.
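The same contrast shows up inside a single language. Here is a small Python sketch of “filter this list to items over 100, then sum them” written both ways; the sample `values` list is made up for the example.

```python
values = [50, 120, 99, 300, 100]

# Declarative: reads almost like the request itself.
declarative_total = sum(v for v in values if v > 100)

# Imperative: same result, but it introduces an accumulator,
# a loop variable, and a branch that the request never mentioned.
imperative_total = 0
for v in values:
    if v > 100:
        imperative_total += v

print(declarative_total, imperative_total)  # prints "420 420"
```

Both produce the same answer. The difference is how many invented names and control-flow decisions sit between the English description and the code.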

The Losers

Java struggles (hey, don’t shoot the messenger!). Its verbosity is legendary for a reason. The boilerplate-to-logic ratio punishes token budgets mercilessly. A simple REST endpoint in Java can consume roughly three times the tokens of the same endpoint in Python or Go. The model knows Java well (there’s an enormous training corpus), but every generation costs more and fills context windows faster.

C++ faces a different problem: complexity. The language has accumulated forty years of features, paradigms, and gotchas. Templates, multiple inheritance, operator overloading, SFINAE, concepts, coroutines. A model generating C++ must navigate a minefield where syntactically valid code can have wildly different semantics depending on context. The error surface is massive.

PHP and Perl lose because the models simply have less high-quality training data for them and because their syntax is idiosyncratic. Perl’s motto was “there’s more than one way to do it.” For humans, that’s freedom. For AI models, that’s ambiguity.

The Underlying Pattern

The languages that win (assuming that generative AI models continue to be built atop natural-language token-based strategies) share three traits:

Strong type systems or static analysis. They give the model (and its output) a safety net. Errors get caught before execution.

Low ceremony. Less boilerplate means fewer tokens for the same functionality. The model spends its token budget on logic, not scaffolding.

Declarative tendencies. Languages that let you express what rather than how align more naturally with natural language descriptions. The shorter the conceptual distance between the English spec and the code, the better the model performs.

This last point deserves emphasis. The best AI-friendly languages are the ones that most resemble natural language in structure. Not in syntax (COBOL tried that, and it didn’t really take in subsequent language designs), but rather in conceptual mapping. Functional and declarative languages describe outcomes. Imperative languages describe procedures. When your input is a description of an outcome, languages built for describing outcomes have a translation advantage.

The Path From Here

The transition won’t happen as a single event. It’s a gradient.

Phase 1 (now): AI writes human-readable code. Humans review it, sometimes. Models get better. Review gets rarer.

Phase 2 (near-term): AI writes human-readable code that nobody reads. The write-only code era. Tests and behavioral verification replace code review. Human-readable source persists as a comfort blanket.

Phase 3 (medium-term): AI writes optimized intermediate representations. Not quite machine code, but not Python either. Something like LLVM IR or WebAssembly: structured enough for the model to reason about, close enough to the metal for significant performance gains. Humans can theoretically read it. Nobody does.

Phase 4 (longer-term): AI generates machine-native code. Natural language in, optimized binaries out. The programming language becomes an implementation detail of the model, not a choice the developer makes.

Each phase requires new trust mechanisms. Phase 1 uses code review. Phase 2 uses testing. Phase 3 needs formal verification tools. Phase 4 demands entirely new paradigms for understanding what software does without reading how it does it.
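What “understanding what software does without reading how it does it” might look like in miniature: treat the implementation as a black box and check properties of its behavior on many random inputs. The sketch below assumes the opaque component is supposed to sort a list; the function names `opaque_good` and `opaque_buggy` are hypothetical stand-ins for generated code we cannot read.

```python
import random
from collections import Counter

def check_sort_behavior(candidate, trials=200, seed=0):
    """Return True if `candidate` behaves like a sort on random inputs."""
    rng = random.Random(seed)
    for _ in range(trials):
        data = [rng.randint(-50, 50) for _ in range(rng.randint(0, 20))]
        out = candidate(list(data))
        # Property 1: output is in non-decreasing order.
        ordered = all(a <= b for a, b in zip(out, out[1:]))
        # Property 2: output contains exactly the input items.
        same_items = Counter(out) == Counter(data)
        if not (ordered and same_items):
            return False
    return True

def opaque_good(xs):
    return sorted(xs)

def opaque_buggy(xs):
    return sorted(set(xs))  # silently drops duplicates

print("good passes:", check_sort_behavior(opaque_good))
print("buggy passes:", check_sort_behavior(opaque_buggy))
```

The buggy version produces perfectly ordered output and would survive a casual spot check; only the second property catches it. Choosing the right properties becomes the job that code review used to do.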

The Training Investment

Getting from Phase 1 to Phase 4 requires deliberate investment in model training infrastructure.

The data already exists, just not in the right format. Linux distribution package repositories contain millions of compiled binaries with corresponding source packages. The Debian archive alone spans decades of compiled software across multiple architectures. LLVM’s test suites contain thousands of source-to-IR-to-machine-code examples with known correctness.

The tooling needs construction. We need pipelines that extract function-level source-to-binary mappings at scale, tools that generate diverse compilation variants (different optimization levels, different flags, different targets), and benchmarking infrastructure that measures both correctness and performance of generated machine code.
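As a sketch of the “diverse compilation variants” piece, the snippet below enumerates a small build matrix and produces one clang command line per combination. It only constructs the argument lists and does not run anything. The file name `example.c`, the output naming scheme, and the particular target triples are assumptions for illustration; actually cross-compiling would also need the matching headers and toolchain pieces installed.

```python
from itertools import product

OPT_LEVELS = ["-O0", "-O1", "-O2", "-O3"]
TARGETS = ["x86_64-linux-gnu", "aarch64-linux-gnu", "riscv64-linux-gnu"]

def variant_commands(source_file: str):
    """Yield one clang argv list per (optimization level, target) pair."""
    stem = source_file.rsplit(".", 1)[0]
    for opt, target in product(OPT_LEVELS, TARGETS):
        output = f"{stem}.{target}{opt}.o"
        # -g keeps debug symbols so functions can be mapped back to source.
        yield ["clang", f"--target={target}", opt, "-g", "-c",
               source_file, "-o", output]

commands = list(variant_commands("example.c"))
for cmd in commands[:3]:
    print(" ".join(cmd))
print(len(commands), "variants for one source file")
```

Four optimization levels across three targets already gives twelve distinct outputs per source file, which is how a modest source corpus fans out into a much larger paired dataset.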

The verification challenge is solvable. Unlike natural language generation, where “correct” is subjective, machine code correctness is far more testable: run it against defined inputs, check the outputs. Edge cases exist (race conditions, floating-point precision across architectures, timing-dependent behavior), but the feedback signal is orders of magnitude cleaner than for prose generation. This makes reinforcement learning unusually effective. Generate, execute, verify, reinforce. The feedback loop is tight and, for deterministic programs, unambiguous.
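The generate, execute, verify, reinforce loop can be sketched with ordinary functions standing in for generated candidates. Everything here is illustrative: the three `power_*` candidates, the test cases, and the reward formula are assumptions of mine, and a real system would execute untrusted native code in a sandbox rather than call Python functions directly.

```python
import time

# (arguments, expected result) pairs that define correct behavior.
TEST_CASES = [((2, 3), 8), ((5, 0), 1), ((3, 4), 81), ((7, 1), 7)]

def power_loop(base, exp):
    result = 1
    for _ in range(exp):
        result *= base
    return result

def power_builtin(base, exp):
    return base ** exp

def power_wrong(base, exp):
    return base * exp  # plausible-looking but incorrect

def reward(candidate, cases=TEST_CASES, repeats=1000):
    """Return 0.0 if any case fails; otherwise a score that rises with speed."""
    for args, expected in cases:
        try:
            if candidate(*args) != expected:
                return 0.0
        except Exception:
            return 0.0
    start = time.perf_counter()
    for _ in range(repeats):
        for args, _expected in cases:
            candidate(*args)
    elapsed = time.perf_counter() - start
    return 1.0 / (1.0 + elapsed)

scores = {f.__name__: reward(f) for f in (power_loop, power_builtin, power_wrong)}
best = max(scores, key=scores.get)
print(scores)
print("reinforce:", best)
```

Correctness acts as a hard gate and speed only ranks the survivors. That ordering matters: a reward that traded correctness against speed would teach the model to ship fast, wrong code.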

The timeline is measured in years, not decades. The foundational capabilities exist. The models can already reason about code. The compilation corpus exists. The verification infrastructure exists. What’s missing is the focused effort to connect these pieces (or maybe one of the frontier model companies is already working on this...I have no visibility there).

The Bottom Line

We built programming languages to make machines comprehensible to, and maintainable by, humans. AI is removing the human from that equation. The logical consequence is code that’s optimized for machines, not for human eyes: faster, smaller, more efficient, and almost completely human-opaque.

The transition will be gradual. The training challenges are real but solvable. The performance gains are enormous. And the languages that survive the middle period will be the ones that best bridge the gap between how humans describe problems and how machines solve them: declarative, type-safe, and low-ceremony. And perhaps a few for artisanal coding and teaching purposes.

We spent sixty years building human-driven tools to get away from coding in machine language for specific hardware and to enhance human readability. Over the next five years, as coding becomes essentially free, I think we’ll do the opposite: have AI write native machine instructions for specific hardware, and largely let go of the human readability constraint.

Old strategy, new tools.

What’s your take? Will human-readable code become a legacy artifact with a few artisanal practitioners, or will we always need the ability to read what our machines produce? Are you seeing AI gravitate toward certain languages already? Is my take painfully misguided or missing fundamental truths? I’d love to hear what you think!

#AI #SoftwareEngineering #Programming #MachineLearning #FutureOfCoding #CompilerDesign #TechLeadership #DeveloperProductivity

[1] https://www.heavybit.com/library/article/write-only-code, published Feb 9, 2026.