An Evolving Strategy for Knowledge Work: from Human-in-the-Loop to Human-Before-the-Loop

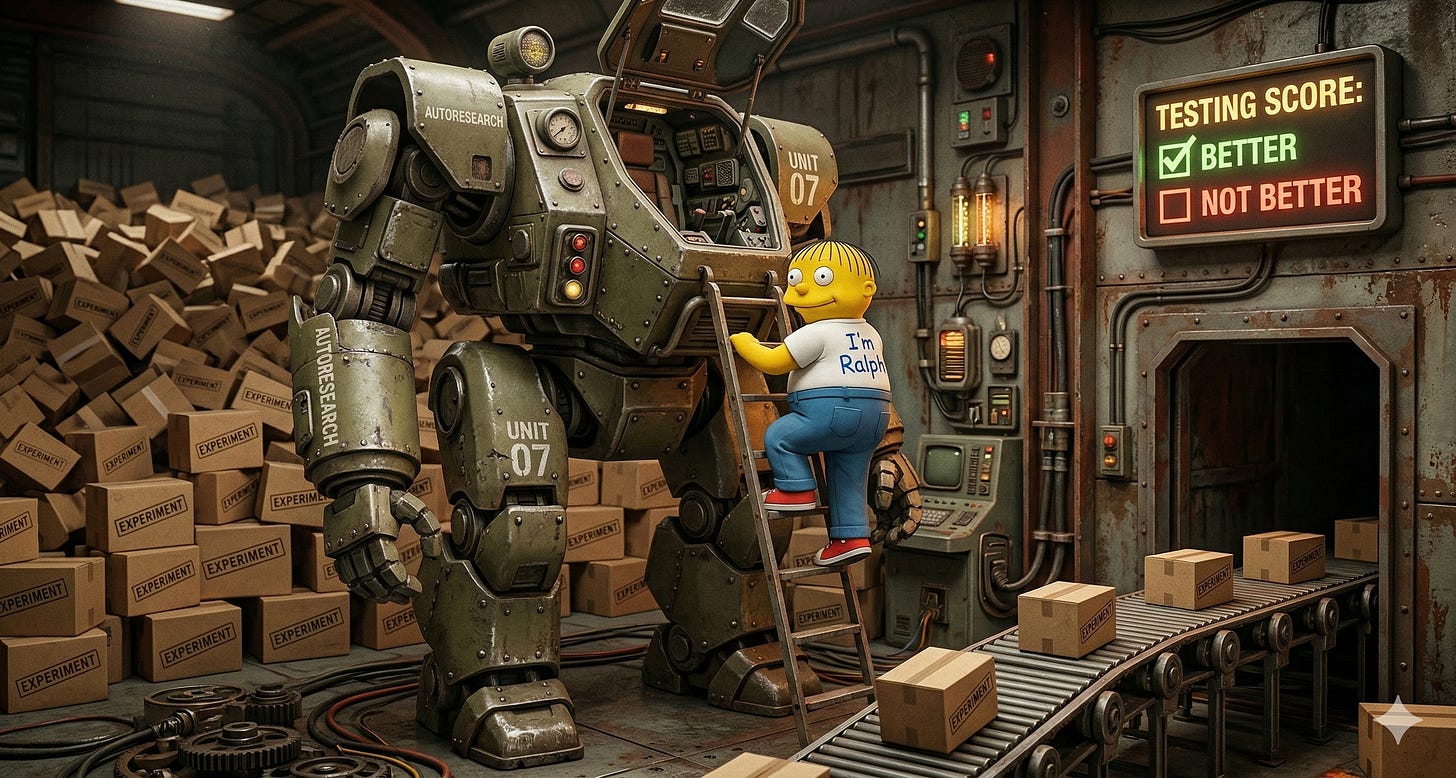

Andrej Karpathy's autoresearch project = 'Ralph Wiggum Plus' (Humans Decide/Describe, AI Tweaks/Tests on Repeat, keeping what moves toward the goal)

You described a goal last night and went to sleep. By morning, your AI researcher had run 100 experiments to chase it: trying approaches, measuring results, discarding failures, iterating again. You didn’t execute a single step of the research. You wrote a text file describing what you wanted and how you’d know when you’d found it.

This is not a thought experiment. This is Andrej Karpathy’s “autoresearch” project, released this week.[1]

The technical details are interesting to engineers. The strategic implication is interesting to everyone else. So let’s spend one paragraph on the former and the rest of the article on the latter.

What the Strategy Actually Is

Karpathy built an autonomous research loop around an AI training task: give the agent a goal, a codebase to modify, and a single metric to optimize. The agent proposes changes, runs short experiments, evaluates whether the metric improved, keeps the winners, discards the rest, and repeats. Roughly 100 cycles overnight, on a single machine with a GPU. The human’s only contribution is a document describing the research direction -- what to optimize, what constraints apply, what counts as progress.[1]
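For the engineers, the loop above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Karpathy's code: `run_experiment`, `propose_change`, the two settings (`lr`, `width`), and the toy score are all hypothetical stand-ins for "edit the codebase, train briefly, read the metric."

```python
import random

def run_experiment(config: dict) -> float:
    """Hypothetical stand-in for a short training run. Lower score is better."""
    # Toy metric with its best value at lr=0.3, width=64.
    # A real run would train a model and report a validation metric.
    return (config["lr"] - 0.3) ** 2 + ((config["width"] - 64) / 64) ** 2

def propose_change(config: dict, rng: random.Random) -> dict:
    """Hypothetical stand-in for the agent's edit: tweak one setting."""
    candidate = dict(config)
    if rng.random() < 0.5:
        nudged = candidate["lr"] + rng.uniform(-0.1, 0.1)
        candidate["lr"] = min(1.0, max(0.0, nudged))
    else:
        candidate["width"] = max(8, candidate["width"] + rng.choice([-8, 8]))
    return candidate

def research_loop(start: dict, cycles: int = 100, seed: int = 0):
    """Propose, measure, keep the winner, discard the rest, repeat."""
    rng = random.Random(seed)
    best, best_score = start, run_experiment(start)
    history = [best_score]
    for _ in range(cycles):
        candidate = propose_change(best, rng)
        score = run_experiment(candidate)
        if score < best_score:          # the metric improved: keep it
            best, best_score = candidate, score
        history.append(best_score)      # losers are simply dropped
    return best, best_score, history

best, best_score, history = research_loop({"lr": 0.9, "width": 16})
print(best, round(best_score, 4))
```

The human-authored parts are the scoring function and the bounds on what may change. Everything inside the `for` loop runs unattended.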

As entrepreneur Garry Tan put it: “design the arena, let AI iterate.”[2]

That phrase captures the strategy completely. But here’s what gets missed in much of the talk about autoresearch: while the arena Karpathy designed is for training AI models, the strategy works for anything where you can define “better” precisely enough for a machine to recognize it. That’s not a narrow category. That’s most of what knowledge workers do.

Not the First Loop -- But One That Adds Power

Autonomous AI loops aren’t new. The “Ralph Wiggum” pattern[3], popularized by Geoffrey Huntley in 2025, does something structurally similar: a simple loop that feeds an AI agent a prompt, checks a completion criterion after each pass, and keeps going until the task is done. Tests pass. Build succeeds. Checklist items are cleared. Ralph Wiggum is the while (not done) loop for AI agents -- widely used, genuinely powerful for task completion.
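As a rough sketch of that while (not done) shape, assuming a hypothetical `agent_step` callable in place of a real agent invocation and a hypothetical `is_done` check in place of a real test suite (the `max_passes` safety cap is also an assumption added here, not part of the pattern as described):

```python
from typing import Callable

def ralph_loop(agent_step: Callable[[dict], None],
               is_done: Callable[[dict], bool],
               state: dict,
               max_passes: int = 50) -> int:
    """Re-run the same step until the completion check passes.

    Returns the number of passes taken. max_passes is a safety cap
    so a check that never passes cannot loop forever.
    """
    passes = 0
    while not is_done(state) and passes < max_passes:
        agent_step(state)
        passes += 1
    return passes

# Toy task: three failing tests, and each pass happens to fix one.
state = {"failing_tests": ["test_login", "test_logout", "test_refresh"]}

def fix_one_test(s: dict) -> None:
    if s["failing_tests"]:
        s["failing_tests"].pop()

def all_tests_pass(s: dict) -> bool:
    return not s["failing_tests"]

passes = ralph_loop(fix_one_test, all_tests_pass, state)
print(passes)  # 3
```

Note that the check is binary: the loop has no notion of "closer" or "further," only done or not done.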

Autoresearch adds one ingredient that levels the pattern up: instead of “keep trying things, and here’s how to tell when you’re done,” the instruction becomes “here’s the metric to optimize; keep tweaking, and keep whatever makes the metric better than before.” Call it Ralph Wiggum Plus.

Ralph Wiggum asks “are we done?” and stops when the answer is yes. Ralph Wiggum Plus asks “are we better than before?” and keeps searching as long as improvement is possible. The distinction sounds subtle, but it isn’t. A binary check works perfectly when there’s a clear finish line -- and many tasks have clear finish lines. A continuous metric works when the goal is optimization -- when there’s no finish line, just a score that can always be improved. Most serious R&D looks more like the latter than the former.

The formalized scoring is what turns a task-completion loop into a research loop. It also removes a key reason many workflows keep a human in the loop: the human is there for judgment, to make sure things stay on track. With a scored metric, we move to human-before-the-loop. Because the scoring is defined up front, an algorithm can perform the evaluation (algorithm-in-the-loop is accurate, but meta). For this to work, the human’s job is to define the scoring clearly enough that a machine can chase it overnight without asking anyone anything.

The Pattern Hiding in Every Knowledge Work Domain

Every knowledge-intensive field runs the same basic loop: form a hypothesis, run an experiment, measure the outcome, iterate. What differs across fields is how long experiments take and how expensive they are. The structure is identical.

This means the autoresearch pattern translates directly to other fields, or extends to them easily:

Legal research: “Search these 10,000 case files for precedents matching these criteria, ranked by how closely the facts align.”

Financial scenario analysis: “Run these 50 market assumptions against our portfolio and surface the configurations that break our risk model.”

Drug discovery: “Screen these 200,000 compound variants for binding affinity to this target protein and report which binds most strongly.”

Strategy consulting: “Test these 30 market segmentation hypotheses against this customer data and identify the most defensible.”

Competitive intelligence: “Monitor these 500 data sources overnight and surface anything that suggests our market assumptions are wrong.”

In every case, a human used to design the experiment, run the experiment, evaluate the results, design the next experiment, and repeat. That loop is autonomous now -- or it will be, field by field, faster than most career planning accounts for.

The human in Karpathy’s loop doesn’t research or write code, or even tell the AI what to do. Instead, they decide on and describe the goal, along with a way to measure success, in a markdown file. Then the AI tweaks things 100 times overnight and keeps whatever strategies move toward the goal.

The bottleneck has moved. It no longer sits at “who can run the experiments (or write the code).” It sits at “who can frame the right experiments to run.”

The Spec Is the Job: Defining the Search Space

Karpathy’s system makes this concrete with a single artifact: a document describing the research program.[4] Not instructions to a coding assistant. A research brief -- the bounded space of hypotheses worth exploring, the success criteria the agent needs to distinguish progress from noise, the constraints that keep experiments valid.

That document is doing something most knowledge workers do instinctively and may not have a name for: it defines the shape of the search space.

Define the space too broadly, and the agent wastes cycles on irrelevant territory. Define it too narrowly, and you miss the result that sits one step outside your assumptions. Get the success metric wrong, and the agent optimizes for the wrong thing and hands you 100 experiments that answer a question nobody asked.
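Those failure modes can be made concrete with a toy sketch. Everything here is an illustrative assumption: the `ResearchBrief` structure, the one-dimensional search space, and the made-up cost function whose true optimum sits at 7. Karpathy's actual brief is a prose document, not a data class.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class ResearchBrief:
    """Hypothetical brief: where to search, how to score, what is off limits."""
    low: float                          # lower bound of the search space
    high: float                         # upper bound of the search space
    metric: Callable[[float], float]    # lower is better
    is_valid: Callable[[float], bool]   # constraints on valid experiments

def search(brief: ResearchBrief, steps: int = 100) -> float:
    """Score evenly spaced candidates inside the brief's bounds; return the best."""
    width = brief.high - brief.low
    candidates = [brief.low + i * width / steps for i in range(steps + 1)]
    valid = [x for x in candidates if brief.is_valid(x)]
    return min(valid, key=brief.metric)

def true_cost(x: float) -> float:
    return (x - 7.0) ** 2               # toy problem: the real optimum is 7

def wrong_cost(x: float) -> float:
    return (x - 2.0) ** 2               # a metric that measures the wrong thing

def anything_goes(x: float) -> bool:
    return True

too_narrow = ResearchBrief(0.0, 5.0, true_cost, anything_goes)
well_framed = ResearchBrief(0.0, 10.0, true_cost, anything_goes)
wrong_metric = ResearchBrief(0.0, 10.0, wrong_cost, anything_goes)

print(search(too_narrow))    # 5.0, stuck at the edge of the space
print(search(well_framed))   # 7.0
print(search(wrong_metric))  # 2.0, a precise answer to the wrong question
```

The search procedure is identical in all three cases. Only the brief changes, and the brief alone determines whether the answer is useful.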

The quality of the research output is bounded by the quality of the research question.

This was always true. A good research director was always more valuable than a fast experimentalist. But when experiments were slow and expensive, the experimentalist’s skill still really mattered -- you needed someone who could squeeze insight from a limited number of runs. When experiments are fast, cheap, and autonomous, the experimentalist’s contribution approaches zero and the research director’s work becomes the only bottleneck that matters.

Autoresearch runs 100 experiments overnight. The one human contribution is a document describing what success looks like. That’s not a footnote. That’s the signal.

What Happens to Knowledge Workers

The knowledge workers who will struggle are the ones whose value lives primarily in the execution layer: running the analysis, pulling the data, drafting the first-pass synthesis, iterating on the output. Those tasks are not disappearing. They’re being absorbed into autonomous loops faster than most people’s career planning accounts for.

The knowledge workers who thrive stay (or move) upstream. Specifically:

Problem framers: people who take an ambiguous business question and decompose it into testable hypotheses. Not “how do we grow revenue?” but “which of these six customer segments show the least price elasticity, and what’s the acquisition cost differential?”

Metric designers: people who define what “better” means with enough precision that a machine can evaluate it without asking a human at every step. One number, computed the same way every time, that doesn’t lie.

Constraint setters: people who know which constraints make experiments valid and which are just organizational habit. The agent runs whatever you permit. Knowing what to prohibit is expertise.

Interpreters: people who look at 100 experimental results, recognize which are meaningful and which are artifacts of the setup, and translate findings into decisions. The agent surfaces winners by its metric. A human decides whether the metric captured the right thing.
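One small piece of the interpreter's judgment can be sketched in code: checking whether a "winner" actually beats the baseline by more than run-to-run noise. This is a minimal sketch under assumptions, with made-up scores, a hypothetical `is_meaningful` helper, and an arbitrary threshold of two standard deviations. It is one possible sanity check, not a substitute for deciding whether the metric captured the right thing.

```python
import statistics

def is_meaningful(baseline_runs: list[float],
                  candidate_score: float,
                  k: float = 2.0) -> bool:
    """Lower is better. True only if the candidate beats the baseline mean
    by more than k standard deviations of the baseline's own noise."""
    mean = statistics.mean(baseline_runs)
    noise = statistics.stdev(baseline_runs)
    return (mean - candidate_score) > k * noise

# Made-up scores from re-running the same unchanged setup five times.
baseline_runs = [1.00, 1.02, 0.98, 1.01, 0.99]

print(is_meaningful(baseline_runs, 0.99))  # False: within normal variation
print(is_meaningful(baseline_runs, 0.90))  # True: well outside the noise
```

An agent that keeps every tiny improvement will happily keep the first kind of result. Spotting that is still a human call, even when a check like this helps.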

None of these are new skills. They’re the skills that separated good researchers from great ones before any of this existed. The difference now is that they’re the only skills that matter at the research level. The execution layer below them is being automated away.

That said, we will need to keep hiring entry-level workers: we need people who can learn those skills, grow, and move upstream into research director roles. Hiring will continue to shift from pyramid-shaped to house-shaped (or obelisk-shaped), with new training and incentives to retain the smaller number of people hired for longer.

AI’s GPS Moment

When GPS became ubiquitous, map-reading became a curiosity. The skill that mattered wasn’t navigating -- it was knowing where you wanted to go. People who couldn’t read a map were fine if they had GPS. It was only people who didn’t know their destination who were lost.

Autonomous research loops are the GPS moment for knowledge work. The navigation is handled. The destination is still on you.

The skill you will need in a world of autonomous research is the ability to write the research brief: one that describes the goals of your work and the scoring criteria for success.

The good news: framing good research questions is learnable. It’s practiced by getting obsessively precise about what you’re actually trying to find out, what would count as a good answer, and what constraints bound the search. It’s the habit of separating “what are we testing” from “how are we testing it” before you touch any tools.

This differs by domain. Ask yourself: what’s the single metric that would tell an autonomous system -- without asking you -- whether an experiment succeeded? If you can answer that, you’re already thinking like a research director. That’s the job description that survives.

The Bottom Line

Karpathy’s autoresearch runs 100 experiments overnight on a single machine. The human contribution is a document describing what success looks like. That ratio -- 100 (or 1000) machine runs from one human brief -- is the shape knowledge work is taking. The people who thrive in it aren’t faster experimentalists. They’re better question-framers, metric-designers, and search-space-architects. The lab may never sleep, but it still requires a human who will decide goals and describe success.

What’s the single metric that would tell an autonomous system, without checking with you, whether an experiment in your field succeeded? I’m genuinely curious whether people in non-technical fields can define it as precisely as Karpathy did -- and what it reveals about their domain if they can’t.

If this resonated, here are some related articles:

For why humans keep underestimating autonomous AI’s rate of improvement (so 100 experiments overnight feels surprising today but won’t in two years):

We’re Linear Thinkers in an Exponentially-Changing World

For the argument that the highest-leverage AI skill is precisely the writing ability that lets you specify what you want clearly enough that a machine can chase it:

The Most Important AI Skill Isn’t Technical

For my prediction about how software development specifically will evolve toward a similar human-out-of-the-loop strategy:

When AI Stops Writing Code for Humans

And if you want an AI skill that makes sure your work is as clear as possible and optimized for LLM understanding:

github: plsfix Skill - Clarity for AI

And a few from my colleagues:

If you’re a coder and you want to see an alternate (and killer) autocoder loop with a similar mindset, check this out by Laird Popkin. It’s awesome!

Autocoder: Turning Claude Code into a Self-Driving Software Engineering team

And two views of how the workforce is changing as AI is further integrated from my colleague Michael Stricklen:

First, overall workforce changes:

The Machines Are Moving Faster Than We Are. What Happens Next?

...and for agentic coding in particular, how frameworks are moving toward a developer-as-conductor model:

The Conductor’s Loop: Naming the New Core Discipline of Software Engineering, and Building the People Who Can Do It

Keith MacKay is a technology strategy consultant and CTO in EY-Parthenon’s Software Strategy Group (SSG), specializing in AI disruption and technology diligence for private equity and corporate clients. SSG’s AI Disruption Lab conducts rapid assessments of how AI transforms and threatens existing business models and value chains. Keith teaches at Northeastern University and writes about strategy, management, and AI/technology.