When Writing Software, the Typing Was Never the Job. Neither Is the Prompting.

With AI coding, the coding steps got easier. The thinking steps got harder.

You asked for a feature. The AI built it. It runs. It passes the tests. And it’s completely wrong -- architecturally backwards, solving a problem you don’t have. The code is fine. Your spec wasn’t.

This is not a prompting problem. It’s not a communication problem. It’s a mental model problem.

Keyboard time is down 70%, 80%, or more. The loop closes faster, the boilerplate disappears, the first draft shows up before you’ve finished your coffee. But the mental work didn’t shrink. It shifted left.

What Coding Actually Was

Before AI, a good developer’s job was never primarily about typing. The keyboard was the final step in a process that looked something like this:

You received a problem. You spent time -- reading documents, in meetings, in debate with peers, in the shower, on a run, staring at a whiteboard at 11pm -- building a mental model. How does this system actually work? Where does this feature live in the architecture? What constraints are non-negotiable? What are the three ways this could go wrong and which one is most likely? If you’ve ever heard a developer say that they did their best work on their commute or while they were drifting off to sleep, they were talking about building a mental model.

Many aspects of building that mental model came from prior coding experiences. Coding metaphors used in the past. Knowledge of the problem domain that didn’t appear anywhere in the problem statement. Tribal knowledge mentioned in shorthand from other developers that told you volumes (”X built that.” “Oh, so it’ll be overcomplicated with no comments.”; “Don’t forget the Y release!” “Right, I need to test for that weird edge case.”). Understanding the things that customers or the client prefer that aren’t anywhere in the spec.

Then, and only then, did you sit down to code. The typing was the translation. You were converting a mental model you had already built into a language the machine could execute. My own typical strategy was to outline the code I was about to write in comments, sketching out the broad strokes and big picture, then filling in the details. Great developers wrote clean code because they had accurate mental models. Mediocre developers wrote confusing code because their mental model was incomplete -- and the code faithfully reflected that incompleteness.

Peter Naur -- 2005 Turing Award winner, contributor to ALGOL, co-creator of Backus-Naur Form -- made this argument formally in 1985. His essay “Programming as Theory Building” [1] proposed that a program is not the primary product of development. The primary product is the shared mental model -- the “theory” of the problem held by everyone who built it. The code is a lossy projection of that theory onto a machine. When the team disperses, the theory disperses with them. What remains is the map. The territory it described lives only in the heads that built it.

The map is not the territory. Development teams with a shared mental model produce tighter code with fewer bugs -- not because they’re smarter, but because the map matches the territory better. The code reflects what the team actually understood, not just what they managed to write down.

The keyboard was where the model became visible. It was never where the real work happened.

What AI Coding Actually Is

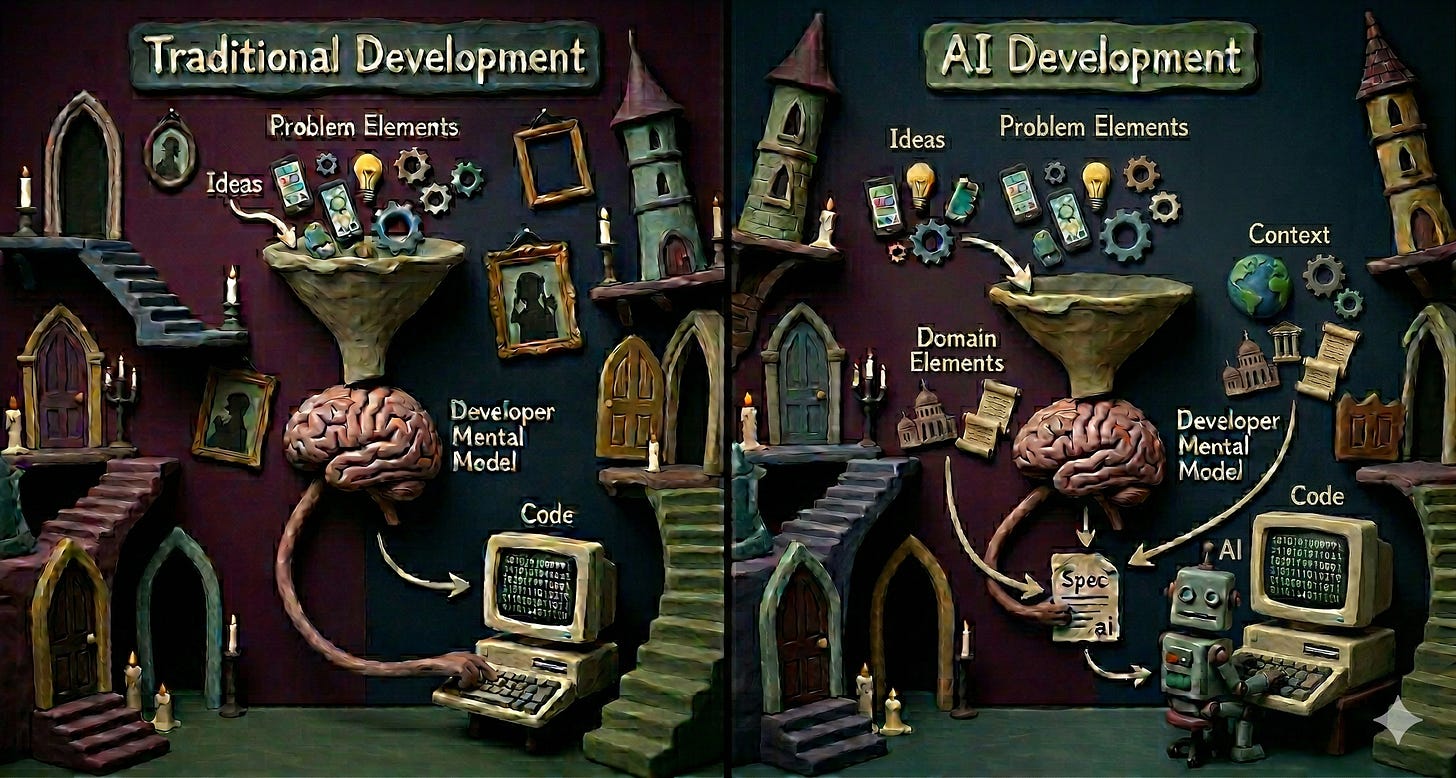

AI coding requires those same elements, but handled differently and expanded.

The translation layer has changed: instead of converting your mental model into code, you convert it into a specification that the AI converts into code. But that chain is not quite that straightforward: the mental model you need to build before writing the spec is significantly wider than the one you needed before writing the code yourself.

Traditional coding required one mental model: the problem model. How does this work? What does it need to do? How does it fit the existing system?

AI coding requires three.

The problem model. Same as before. Understand what you’re building and why. Know the edge cases. Anticipate the failure modes. Nothing changed here except that you now have to externalize all of it in writing instead of keeping it in your head and translating on the fly. That requires good communication skills AS WELL AS an accurate mental model of the problem. And time at the left end of the process that the developer didn’t used to spend.

The domain model. In. the past, experienced developers absorbed this intuitively -- background knowledge, never named, never documented. What patterns are canonical in this codebase? What libraries are in use? What architectural decisions from three years ago does the new feature have to respect? AI coding forces it to be explicit. The AI has three options: you provide domain info in the spec, the AI infers it from the existing codebase, or it invents its own conventions. The third option produces code that is technically correct but leaves out huge swathes of the territory.

The context model. This is the new one: the model of everything an experienced developer would know inherently about your specific situation that the AI doesn’t know and can’t infer from the code.

A developer’s mental model is earned. It’s built from specific bugs that bit them, architectural decisions that aged poorly, business rules that exist for reasons no longer in any document. They have lived in the territory.

The AI has very good maps. It has seen more code than any human ever will. But its “model” is a statistical distribution over training data -- a sophisticated average of what code usually looks like in similar contexts. It will fill the gap between its training and your situation. It always fills the gap (that is its whole job!). The question is whether it fills it with your intent or with the most plausible answer drawn from everything it has ever seen.

Plausible and correct are not the same thing. They converge when your situation resembles the training distribution. They diverge -- sharply -- when your system has constraints, conventions, or history that no external codebase would know about.

The context model is your map of that gap. It answers: what will this AI get plausibly wrong about our situation? What conventions does it default to that we’ve specifically rejected? What background does it need to make the right call, rather than the statistically reasonable one?

(Every developer who has watched an AI confidently build the wrong thing -- technically correct, architecturally backwards -- has just discovered the context model they forgot to write.)

Why Specs Fail

Bad specifications are not a communication problem. They’re a mental model problem.

A specification is a lossy compression of a mental model. The AI decompresses it. Every error in the output is a decompression artifact -- a place where the spec didn’t carry enough information, and the AI substituted something from its training instead of your intent.

This is why “write better prompts” is incomplete advice. You can be an excellent writer and still produce a bad spec, if the mental model behind it is incomplete. The limiting factor isn’t vocabulary or clarity. It’s the width and accuracy of what you understood before you started writing. Clarity, completeness, and appropriate granularity are always the order of the day, but (as with all communication challenges) knowing your audience and truly understanding your topic (i.e., having an accurate mental model) are keys to success.

The reason vibe coding produces inconsistent results is structural. You’re asking the AI to fill not just implementation details, but architectural decisions, domain constraints, and context gaps -- simultaneously, from a prompt that captured none of them. Sometimes it guesses right. The batting average reflects the size of the gap, not the capability of the model.

Every gap in your spec is an invitation for the AI to improvise. Sometimes improvisation is fine. Often it isn’t. The difference is whether you know where the gaps are.

The Mental Model Is Now Your Primary Output

Thinking about spec-writing as “making a mental model visible” changes who the most valuable developers are.

The old hierarchy put a premium on implementation skill: algorithmic thinking, language fluency, the ability to hold complex systems in working memory and navigate them efficiently. Those skills still matter, both for the domain model and for the problem model. But they’re no longer the whole picture.

The whole picture now includes mental model quality -- specifically, the ability to construct a model wide enough to span all three dimensions and precise enough to survive two translations: from your head into a spec, and from the spec into code. Call it the telephone problem. Each translation is lossy. Every ambiguity in your spec is noise introduced at translation one. Every gap in the AI’s training that it fills with a statistical default is noise introduced at translation two. The original message degrades at each step. The only protection is to start with a model that’s wider and more precise than the final output needs to be.

Senior developers just got more valuable. Domain expertise is the raw material of the domain model. You cannot specify what you don’t understand. The developer who has spent five years in a codebase carries a domain model that a junior developer with better prompting skills cannot replicate from documentation alone. As LLMs improve and absorb more domain-specific training, this advantage will compress. For now, it still carries real weight.

The developers who are struggling right now are the ones who built their identity and their value around implementation fluency -- the joy and precision of the hands-on-keyboard moment -- and haven’t yet internalized that the mental model is where their expertise actually lives. The keyboard was always a vehicle. It just wasn’t the only vehicle.

The developers who are thriving are the ones who have realized that what they were always doing was building models of reality and converting them into executable form. The conversion format changed. The model didn’t.

What This Looks Like in Practice

Writing a good spec now means answering questions that developers used to answer in their own mental models and implement in their code on-the-fly, without recording the question or the decision anywhere:

What is the invariant this feature must never violate?

Which existing pattern does this follow, and where does that pattern live in the codebase?

What would a reasonable developer (human or AI) guess wrong about this system, and what does it need to know to guess right?

Where does my intent diverge from the obvious interpretation of my instructions?

This is not easier than writing code. It requires a different kind of rigor -- not the rigor of syntax and runtime behavior, but the rigor of explicit articulation. You are writing for a reader that will take you literally and might not ask a good follow-up question if your spec is ambiguous.

The developers who were always good at explaining their thinking to other humans -- in code reviews, in architecture discussions, in requirements sessions -- tend to adapt faster. The ones who did their best thinking in private, at the keyboard, translating directly from intuition to syntax, face a steeper curve.

Where AI’s Map Diverges from Your Territory

The gap between AI’s statistical model and your mental model isn’t fixed. It varies by domain, by codebase, and by the specificity of what you’re building.

In a well-trodden domain -- standard web application, familiar framework, conventional patterns -- the AI’s training distribution is close to your territory. The statistical completion is often right. The maps mostly match.

The divergence grows as your situation becomes more specific:

Novel or niche domains where training data is sparse and the AI’s priors are built on the wrong examples

Heavily regulated industries where constraints are non-standard and a plausible-but-wrong answer carries real cost: healthcare, legal, defense, finance

Mature codebases with accumulated decisions -- systems where the “why” behind architectural choices never made it into any document

Deliberate departures from convention -- your team rejected the obvious approach for a reason, and without that context in the spec, the AI rediscovers the obvious approach every session

The depth of context model your spec needs scales directly with how far your territory sits from the AI’s map.

The Bottom Line

Coding was always about building mental models of a problem and translating them into something a machine could execute. AI didn’t change that. It widened the model(s) required -- from problem-only to problem plus domain plus context -- and changed the translation target from mental-model-to-code to mental-model-to-specification (and then leaving it to the AI to handle specification-to-code).

The keyboarding got faster, but the thinking got harder, and the documentation got more explicit.

The developers who will define the next wave of software are not the ones who write the best prompts. They’re the ones who build the most complete models before they write anything at all.

The AI has very good maps. The question is whether your spec gives it the territory. What’s the worst “plausible but wrong” answer an AI has produced for your team -- and what did the spec miss?

References

If this resonated, here are some related articles:

For why writing detailed implementation plans -- specs that assume the implementer has “zero context and questionable taste” -- is one strategy for good AI coding sessions: Fabricate, Collaborate, Elaborate, Delegate, Validate

For the broader argument about what share of software production work actually consists of coding -- and why “100% AI-written code” is real but overstated: What “100% of Our Code Is Written by AI” Actually Means | Substack

The orchestration skills described here as necessary developer add-ons are largely product management skills in disguise: The Best AI Engineers Are Product Managers | Substack

Keith MacKay is a technology strategy consultant and CTO in EY-Parthenon’s Software Strategy Group (SSG), specializing in AI disruption and technology diligence for private equity and corporate clients. SSG’s AI Disruption Lab conducts rapid assessments of how AI transforms and threatens existing business models and value chains. Keith teaches at Northeastern University and writes about strategy, management, and AI/technology.

#AI #SoftwareEngineering #DeveloperProductivity